Abstract

WebGPU exposes an API for performing operations, such as rendering and computation, on a Graphics Processing Unit.

Status of this document

This specification was published by the GPU for the Web Community Group. It is not a W3C Standard nor is it on the W3C Standards Track. Please note that under the W3C Community Contributor License Agreement (CLA) there is a limited opt-out and other conditions apply. Learn more about W3C Community and Business Groups.

1. Introduction

This section is non-normative.

Graphics Processing Units, or GPUs for short, have been essential in enabling rich rendering and computational applications in personal computing. WebGPU is an API that exposes the capabilities of GPU hardware for the Web. The API is designed from the ground up to efficiently map to (post-2014) native GPU APIs. WebGPU is not related to WebGL and does not explicitly target OpenGL ES.

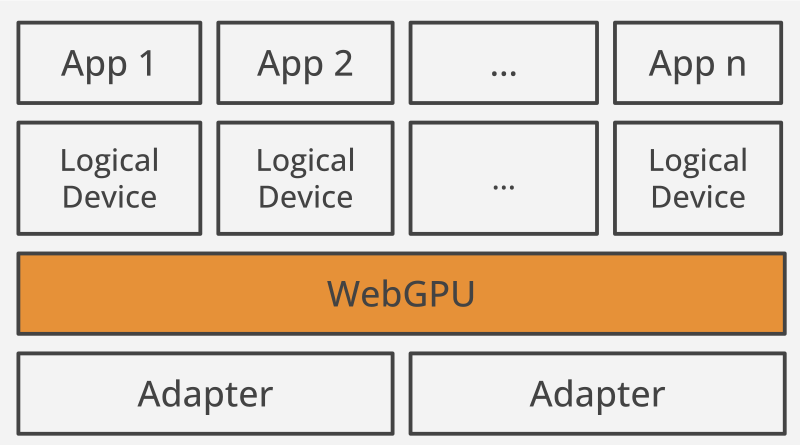

WebGPU sees physical GPU hardware as GPUAdapters. It provides a connection to an adapter via GPUDevice, which manages resources, and the device’s GPUQueues, which execute commands. GPUDevice may have its own memory with high-speed access to the processing units. GPUBuffer and GPUTexture are the physical resources backed by GPU memory. GPUCommandBuffer and GPURenderBundle are containers for user-recorded commands. GPUShaderModule contains shader code. The other resources, such as GPUSampler or GPUBindGroup, configure the way physical resources are used by the GPU.

GPUs execute commands encoded in GPUCommandBuffers by feeding data through a pipeline, which is a mix of fixed-function and programmable stages. Programmable stages execute shaders, which are special programs designed to run on GPU hardware. Most of the state of a pipeline is defined by a GPURenderPipeline or a GPUComputePipeline object. The state not included in these pipeline objects is set during encoding with commands, such as beginRenderPass() or setBlendConstant().

2. Malicious use considerations

This section is non-normative. It describes the risks associated with exposing this API on the Web.

2.1. Security Considerations

The security requirements for WebGPU are the same as ever for the web, and are likewise non-negotiable. The general approach is strictly validating all the commands before they reach the GPU, ensuring that a page can only work with its own data.

2.1.1. CPU-based undefined behavior

A WebGPU implementation translates the workloads issued by the user into API commands specific to the target platform. Native APIs specify the valid usage for the commands (for example, see vkCreateDescriptorSetLayout) and generally don’t guarantee any outcome if the valid usage rules are not followed. This is called “undefined behavior”, and it can be exploited by an attacker to access memory they don’t own, or force the driver to execute arbitrary code.

In order to disallow insecure usage, the range of allowed WebGPU behaviors is defined for any input. An implementation has to validate all the input from the user and only reach the driver with the valid workloads. This document specifies all the error conditions and handling semantics. For example, specifying the same buffer with intersecting ranges in both “source” and “destination” of copyBufferToBuffer() results in GPUCommandEncoder generating an error, and no other operation occurring.
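The intersecting-range example above can be sketched as a small host-side check. This is an illustration only, not the normative validation algorithm (which is defined elsewhere in this document and may be stricter). The names `CopyEndpoint`, `bufferId`, `copyRangesIntersect`, and `tryRecordCopy` are hypothetical and are not part of the WebGPU API.

```typescript
// Hypothetical stand-in for one side of a buffer-to-buffer copy.
// `bufferId` is an assumed identity for a GPUBuffer, not a real WebGPU field.
interface CopyEndpoint {
  bufferId: number;
  offset: number; // byte offset into the buffer
}

// Returns true when both endpoints name the same buffer and the byte ranges
// [offset, offset + size) share at least one byte.
function copyRangesIntersect(
  source: CopyEndpoint,
  destination: CopyEndpoint,
  size: number
): boolean {
  if (source.bufferId !== destination.bufferId || size <= 0) {
    return false;
  }
  return (
    source.offset < destination.offset + size &&
    destination.offset < source.offset + size
  );
}

// Sketch of "generate an error, and no other operation occurs":
// the copy is recorded only when the check passes.
function tryRecordCopy(
  commands: string[],
  errors: string[],
  source: CopyEndpoint,
  destination: CopyEndpoint,
  size: number
): boolean {
  if (copyRangesIntersect(source, destination, size)) {
    errors.push("copy ranges intersect within the same buffer");
    return false;
  }
  commands.push("copy " + size + " bytes");
  return true;
}
```

In this sketch a rejected copy leaves the recorded command list untouched, which mirrors the idea that an invalid workload never reaches the driver.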

See § 22 Errors & Debugging for more information about error handling.

2.1.2. GPU-based undefined behavior

WebGPU shaders are executed by the compute units inside GPU hardware. In native APIs, some of the shader instructions may result in undefined behavior on the GPU. In order to address that, the shader instruction set and its defined behaviors are strictly defined by WebGPU. When a shader is provided to createShaderModule(), the WebGPU implementation has to validate it before doing any translation (to platform-specific shaders) or transformation passes.

2.1.3. Uninitialized data

Generally, allocating new memory may expose the leftover data of other applications running on the system. In order to address that, WebGPU conceptually initializes all the resources to zero, although in practice an implementation may skip this step if it sees the developer initializing the contents manually. This includes variables and shared workgroup memory inside shaders.

The precise mechanism of clearing the workgroup memory can differ between platforms. If the native API does not provide facilities to clear it, the WebGPU implementation transforms the compute shader to first do a clear across all invocations, synchronize them, and then continue executing the developer’s code.

Note: The initialization status of a resource used in a queue operation can only be known when the operation is enqueued (not when it is encoded into a command buffer, for example). Therefore, some implementations will require an unoptimized late-clear at enqueue time (e.g. clearing a texture, rather than changing GPULoadOp "load" to "clear").

As a result, all implementations should issue a developer console warning about this potential performance penalty, even if there is no penalty in that implementation.

2.1.4. Out-of-bounds access in shaders

Shaders can access physical resources either directly (for example, as a "uniform" GPUBufferBinding), or via texture units, which are fixed-function hardware blocks that handle texture coordinate conversions. Validation in the WebGPU API can only guarantee that all the inputs to the shader are provided and they have the correct usage and types. The WebGPU API cannot guarantee that the data is accessed within bounds if the texture units are not involved.

In order to prevent the shaders from accessing GPU memory an application doesn’t own, the WebGPU implementation may enable a special mode (called “robust buffer access”) in the driver that guarantees that the access is limited to buffer bounds.

Alternatively, an implementation may transform the shader code by inserting manual bounds checks. When this path is taken, the out-of-bound checks only apply to array indexing. They aren’t needed for plain field access of shader structures due to the minBindingSize validation on the host side.

If the shader attempts to load data outside of physical resource bounds, the implementation is allowed to:

- return a value at a different location within the resource bounds

- return a value vector of “(0, 0, 0, X)” with any “X”

- partially discard the draw or dispatch call

If the shader attempts to write data outside of physical resource bounds, the implementation is allowed to:

- write the value to a different location within the resource bounds

- discard the write operation

- partially discard the draw or dispatch call
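Two of the permitted outcomes listed above can be modeled on the CPU. The sketch below picks one allowed behavior for loads (returning a value from a different in-bounds location, by clamping the index) and one for stores (discarding the write). It is a model of the semantics only; real implementations apply such checks inside the translated shader or rely on the driver. The function names `robustLoad` and `robustStore` are hypothetical.

```typescript
// Load with index clamping: an out-of-bounds index reads the nearest
// in-bounds element instead of memory outside the resource.
// An empty resource, or a non-numeric index, yields 0.
function robustLoad(data: Float32Array, index: number): number {
  if (data.length === 0) {
    return 0;
  }
  const clamped = Math.min(Math.max(Math.trunc(index), 0), data.length - 1);
  return data[clamped] ?? 0;
}

// Store that discards out-of-bounds writes. Returns true if the write happened.
function robustStore(data: Float32Array, index: number, value: number): boolean {
  if (!Number.isInteger(index) || index < 0 || index >= data.length) {
    return false; // write discarded
  }
  data[index] = value;
  return true;
}
```

Either behavior keeps the access inside the resource, which is the property that matters for security; which one an application observes is implementation-dependent.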

2.1.5. Invalid data

When uploading floating-point data from CPU to GPU, or generating it on the GPU, we may end up with a binary representation that doesn’t correspond to a valid number, such as infinity or NaN (not-a-number). The GPU behavior in this case is subject to the accuracy of the GPU hardware implementation of the IEEE-754 standard. WebGPU guarantees that introducing invalid floating-point numbers would only affect the results of arithmetic computations and will not have other side effects.
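As an illustration of such binary representations, the sketch below reinterprets 32-bit patterns as floats on the CPU and counts the non-finite values in an array before it would be uploaded. The helper names `floatFromBits` and `countNonFinite` are hypothetical, and the code only demonstrates CPU-side behavior; it makes no claim about how any particular GPU treats these values.

```typescript
// Reinterpret a 32-bit pattern as an IEEE-754 single-precision float.
// The same (default, big-endian) byte order is used for writing and reading.
function floatFromBits(bits: number): number {
  const view = new DataView(new ArrayBuffer(4));
  view.setUint32(0, bits);
  return view.getFloat32(0);
}

// Count values that are NaN or +/-Infinity.
function countNonFinite(values: Float32Array): number {
  return values.reduce(
    (count, value) => count + (Number.isFinite(value) ? 0 : 1),
    0
  );
}

const quietNaN = floatFromBits(0x7fc00000);         // a NaN bit pattern
const positiveInfinity = floatFromBits(0x7f800000); // +Infinity
const one = floatFromBits(0x3f800000);              // 1.0

// NaN propagates through arithmetic, which is the only effect it should have.
const propagated = quietNaN + one;
```

An application that wants deterministic results could use a check like `countNonFinite` to reject or sanitize data before upload.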

2.1.6. Driver bugs

GPU drivers are subject to bugs like any other software. If a bug occurs, an attacker could possibly exploit the incorrect behavior of the driver to get access to unprivileged data. In order to reduce the risk, the WebGPU working group will coordinate with GPU vendors to integrate the WebGPU Conformance Test Suite (CTS) as part of their driver testing process, as was done for WebGL. WebGPU implementations are expected to have workarounds for some of the discovered bugs, and disable WebGPU on drivers with known bugs that can’t be worked around.

2.1.7. Timing attacks

WebGPU is designed to later support multi-threaded use via Web Workers. As such, it is designed not to open the users to modern high-precision timing attacks. Some of the objects, like GPUBuffer or GPUQueue, have shared state which can be simultaneously accessed. This allows race conditions to occur, similar to those of accessing a SharedArrayBuffer from multiple Web Workers, which makes the thread scheduling observable.

WebGPU addresses this by limiting the ability to deserialize (or share) objects only to the agents inside the agent cluster, and only if the cross-origin isolated policies are in place. This restriction matches the mitigations against the malicious SharedArrayBuffer use. Similarly, the user agent may also serialize the agents sharing any handles to prevent any concurrency entirely.

In the end, the attack surface for races on shared state in WebGPU will be a small subset of the SharedArrayBuffer attacks.

WebGPU also specifies the "timestamp-query" feature, which provides high precision timing of GPU operations. The feature is optional, and a WebGPU implementation may limit its exposure only to those scenarios that are trusted. Alternatively, the timing query results could be processed by a compute shader and aligned to a lower precision.
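Aligning timing results to a lower precision amounts to rounding each timestamp down to a multiple of a coarser resolution. The sketch below shows that arithmetic on the CPU, under the assumption that timestamps are nanosecond counts small enough to be represented exactly as JavaScript numbers. The function name `quantizeTimestamp` and the 100-microsecond resolution are hypothetical choices, not values taken from this document.

```typescript
// Round a timestamp down to a multiple of `resolutionNs`.
// Assumes non-negative integer inputs below 2^53.
function quantizeTimestamp(timestampNs: number, resolutionNs: number): number {
  if (!(resolutionNs > 0)) {
    throw new RangeError("resolutionNs must be positive");
  }
  return Math.floor(timestampNs / resolutionNs) * resolutionNs;
}

// Hypothetical resolution: 100 microseconds expressed in nanoseconds.
const COARSE_RESOLUTION_NS = 100000;

const beginNs = quantizeTimestamp(1234567, COARSE_RESOLUTION_NS);
const endNs = quantizeTimestamp(1299999, COARSE_RESOLUTION_NS);
// Both timestamps land in the same bucket, so the measured duration is 0.
const durationNs = endNs - beginNs;
```

Events that differ by less than the chosen resolution become indistinguishable, which is the intent of the mitigation.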

2.1.8. Row hammer attacks

Row hammer is a class of attacks that exploit the leaking of states in DRAM cells. Such attacks could also be mounted on a GPU. WebGPU does not have any specific mitigations in place, and relies on platform-level solutions, such as reduced memory refresh intervals.

2.1.9. Denial of service

WebGPU applications have access to GPU memory and compute units. A WebGPU implementation may limit the available GPU memory to an application, in order to keep other applications responsive. For GPU processing time, a WebGPU implementation may set up a “watchdog” timer that makes sure an application doesn’t cause GPU unresponsiveness for more than a few seconds. These measures are similar to those used in WebGL.

2.1.10. Workload identification

WebGPU provides access to constrained global resources shared between different programs (and web pages) running on the same machine. An application can try to indirectly probe how constrained these global resources are, in order to reason about workloads performed by other open web pages, based on the patterns of usage of these shared resources. These issues are generally analogous to issues with JavaScript, such as system memory and CPU execution throughput. WebGPU does not provide any additional mitigations for this.

2.1.11. Memory resources

WebGPU exposes fallible allocations from machine-global memory heaps, such as VRAM. This allows for probing the size of the system’s remaining available memory (for a given heap type) by attempting to allocate and watching for allocation failures.

GPUs internally have one or more (typically only two) heaps of memory shared by all running applications. When a heap is depleted, WebGPU would fail to create a resource. This is observable, which may allow a malicious application to guess what heaps are used by other applications, and how much they allocate from them.

2.1.12. Computation resources

If one site uses WebGPU at the same time as another, it may observe the increase in time it takes to process some work. For example, if a site constantly submits compute workloads and tracks completion of work on the queue, it may observe that something else also started using the GPU.

A GPU has many parts that can be tested independently, such as the arithmetic units, texture sampling units, atomic units, etc. A malicious application may sense when some of these units are stressed, and attempt to guess the workload of another application by analyzing the stress patterns. This is analogous to the realities of CPU execution of JavaScript.

2.1.13. Abuse of capabilities

Malicious sites could abuse the capabilities exposed by WebGPU to run computations that don’t benefit the user or their experience and instead only benefit the site. Examples would be hidden crypto-mining, password cracking or rainbow tables computations.

It is not possible to guard against these types of uses of the API because the browser is not able to distinguish between valid workloads and abusive workloads. This is a general problem with all general-purpose computation capabilities on the Web: JavaScript, WebAssembly or WebGL. WebGPU only makes some workloads easier to implement, or slightly more efficient to run than using WebGL.

To mitigate this form of abuse, browsers can throttle operations on background tabs, warn that a tab is using a lot of resources, and restrict which contexts are allowed to use WebGPU.

User agents can heuristically issue warnings to users about high power use, especially due to potentially malicious usage. If a user agent implements such a warning, it should include WebGPU usage in its heuristics, in addition to JavaScript, WebAssembly, WebGL, and so on.

Should WebGPU have Permissions Policy / Feature Policy? [Issue #gpuweb/gpuweb#3483]

2.2. Privacy Considerations

The privacy considerations for WebGPU are similar to those of WebGL. GPU APIs are complex and must expose various aspects of a device’s capabilities out of necessity in order to enable developers to take advantage of those capabilities effectively. The general mitigation approach involves normalizing or binning potentially identifying information and enforcing uniform behavior where possible.

2.2.1. Machine-specific features and limits

WebGPU can expose a lot of detail on the underlying GPU architecture and the device geometry. This includes available physical adapters, many limits on the GPU and CPU resources that could be used (such as the maximum texture size), and any optional hardware-specific capabilities that are available.

User agents are not obligated to expose the real hardware limits; they are in full control of how much of the machine specifics is exposed. One strategy to reduce fingerprinting is binning all the target platforms into a small number of bins. In general, the privacy impact of exposing the hardware limits matches that of WebGL.

The default limits are also deliberately high enough to allow most applications to work without requesting higher limits. All the usage of the API is validated according to the requested limits, so the actual hardware capabilities are not exposed to the users by accident.
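The binning strategy and the validation against requested limits can be sketched together. In the sketch below, a user agent reports the largest bin that does not exceed the real hardware value, and API usage is then checked against the limit the application requested rather than against the hardware. The bin values and the names `binLimit` and `isTextureWidthValid` are hypothetical; 8192 is used as the floor because it is the default value of `maxTextureDimension2D`.

```typescript
// Hypothetical bins for a texture dimension limit, in texels.
const TEXTURE_DIMENSION_BINS: readonly number[] = [8192, 16384];

// Report the largest bin that does not exceed the hardware value,
// never reporting less than the default limit.
function binLimit(
  hardwareValue: number,
  defaultValue: number,
  bins: readonly number[]
): number {
  let reported = defaultValue;
  for (const bin of bins) {
    if (bin <= hardwareValue && bin > reported) {
      reported = bin;
    }
  }
  return reported;
}

// Validate against the limit the application requested, so that larger
// hardware capabilities are never revealed by accident.
function isTextureWidthValid(width: number, requestedLimit: number): boolean {
  return Number.isInteger(width) && width >= 1 && width <= requestedLimit;
}
```

With these bins, hardware supporting 12000 texels and hardware supporting 8192 texels both report 8192, so the two become indistinguishable through this limit.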

2.2.2. Machine-specific artifacts

There are some machine-specific rasterization/precision artifacts and performance differences that can be observed roughly in the same way as in WebGL. This applies to rasterization coverage and patterns, interpolation precision of the varyings between shader stages, compute unit scheduling, and more aspects of execution.

Generally, rasterization and precision fingerprints are identical across most or all of the devices of each vendor. Performance differences are relatively intractable, but also relatively low-signal (as with JS execution performance).

Privacy-critical applications and user agents should utilize software implementations to eliminate such artifacts.

2.2.3. Machine-specific performance

Another factor for differentiating users is measuring the performance of specific operations on the GPU. Even with low precision timing, repeated execution of an operation can show if the user’s machine is fast at specific workloads. This is a fairly common vector (present in both WebGL and JavaScript), but it’s also low-signal and relatively intractable to truly normalize.

WebGPU compute pipelines expose access to the GPU unobstructed by the fixed-function hardware. This poses an additional risk for unique device fingerprinting. User agents can take steps to dissociate logical GPU invocations from actual compute units to reduce this risk.

2.2.4. User Agent State

This specification doesn’t define any additional user-agent state for an origin. However, it is expected that user agents will have compilation caches for the results of expensive compilation, such as for GPUShaderModule, GPURenderPipeline and GPUComputePipeline. These caches are important to improve the loading time of WebGPU applications after the first visit.

For the specification, these caches are indistinguishable from incredibly fast compilation, but for applications it would be easy to measure how long createComputePipelineAsync() takes to resolve. This can leak information across origins (like “did the user access a site with this specific shader”), so user agents should follow the best practices in storage partitioning.

The system’s GPU driver may also have its own cache of compiled shaders and pipelines. User agents may want to disable these when at all possible, or add per-partition data to shaders in ways that will make the GPU driver consider them different.
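One way to picture a partitioned compilation cache is a lookup keyed on both the storage partition and the shader source, so that a hit in one partition reveals nothing to another. The sketch below is a minimal in-memory model under that assumption. The class name `PartitionedShaderCache`, the string partition identifiers, and the string stand-in for compiled output are all hypothetical and do not describe any user agent's actual cache.

```typescript
// In-memory model of a compilation cache keyed by (partition, source).
class PartitionedShaderCache {
  private readonly entries = new Map<string, string>();

  // JSON encoding of the pair keeps the key unambiguous even if the
  // partition or source text contains separator characters.
  private static key(partition: string, source: string): string {
    return JSON.stringify([partition, source]);
  }

  get(partition: string, source: string): string | undefined {
    return this.entries.get(PartitionedShaderCache.key(partition, source));
  }

  set(partition: string, source: string, compiled: string): void {
    this.entries.set(PartitionedShaderCache.key(partition, source), compiled);
  }
}

const shaderCache = new PartitionedShaderCache();
shaderCache.set("https://a.example", "shader source", "compiled blob");

// Same source, different partition: a miss, so compile time leaks nothing.
const sameOriginHit = shaderCache.get("https://a.example", "shader source");
const crossOriginHit = shaderCache.get("https://b.example", "shader source");
```

The same idea underlies adding per-partition data to shaders before they reach the driver: identical source from different partitions is made to look different to the cache.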

2.2.5. Driver bugs

In addition to the concerns outlined in Security Considerations, driver bugs may introduce differences in behavior that can be observed as a method of differentiating users. The mitigations mentioned in Security Considerations apply here as well, including coordinating with GPU vendors and implementing workarounds for known issues in the user agent.

2.2.6. Adapter Identifiers

Past experience with WebGL has demonstrated that developers have a legitimate need to be able to identify the GPU their code is running on in order to create and maintain robust GPU-based content. For example, developers may need to identify adapters with known driver bugs in order to work around them, or to avoid features that perform more poorly than expected on a given class of hardware.

But exposing adapter identifiers also naturally expands the amount of fingerprinting information available, so there’s a desire to limit the precision with which we identify the adapter.

There are several mitigations that can be applied to strike a balance between enabling robust content and preserving privacy. First is that user agents can reduce the burden on developers by identifying and working around known driver issues, as they have since browsers began making use of GPUs.

When adapter identifiers are exposed by default, they should be as broad as possible while still being useful. For example, they could identify the adapter’s vendor and general architecture without identifying the specific adapter in use. Similarly, in some cases identifiers for an adapter that is considered a reasonable proxy for the actual adapter may be reported.

In cases where full and detailed information about the adapter is useful (for example: when filing bug reports) the user can be asked for consent to reveal additional information about their hardware to the page.

Finally, the user agent will always have the discretion to not report adapter identifiers at all if it considers it appropriate, such as in enhanced privacy modes.
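The three disclosure levels described above (broad identifiers by default, full detail with user consent, and nothing in enhanced privacy modes) can be sketched as a filter over the adapter's real identifiers. The field names below mirror the identifier strings an adapter can report; the interface name `AdapterInfoLike`, the `DisclosureLevel` type, the function `reportAdapterInfo`, and the sample values are hypothetical, and the policy shown is one possible choice rather than required behavior.

```typescript
// Local stand-in for adapter identifier strings.
interface AdapterInfoLike {
  vendor: string;
  architecture: string;
  device: string;
  description: string;
}

// "none":   enhanced privacy mode, report nothing
// "coarse": default, vendor and general architecture only
// "full":   the user consented to reveal detailed information
type DisclosureLevel = "none" | "coarse" | "full";

function reportAdapterInfo(
  actual: AdapterInfoLike,
  level: DisclosureLevel
): AdapterInfoLike {
  if (level === "full") {
    return { ...actual };
  }
  if (level === "coarse") {
    return {
      vendor: actual.vendor,
      architecture: actual.architecture,
      device: "",
      description: "",
    };
  }
  return { vendor: "", architecture: "", device: "", description: "" };
}

// Hypothetical adapter used for illustration.
const actualAdapter: AdapterInfoLike = {
  vendor: "examplevendor",
  architecture: "example-arch",
  device: "example-model-1234",
  description: "Example GPU 1234 (driver 1.2.3)",
};

const defaultReport = reportAdapterInfo(actualAdapter, "coarse");
```

Empty strings are used here for withheld fields so that callers can treat every level uniformly.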